In Poland, there is a surprisingly emotional debate around a very simple product: mayonnaise.

Two brands dominate the conversation — Majonez Kielecki and Winiary.

I'm from Kielce, the city where Majonez Kielecki is made, so this debate hits close to home. But for many people across Poland, this choice is not just about taste or price. It's cultural. The “Kielecki vs Winiary” debate regularly comes back in media, online discussions, and even political memes.

That makes it a perfect test case for a much broader question:

When people ask AI for recommendations, which brand does AI actually choose — and why?

This article is not really about mayonnaise.

It's about how AI systems form preferences, repeat narratives, and decide which brands are visible in their answers.

The experiment

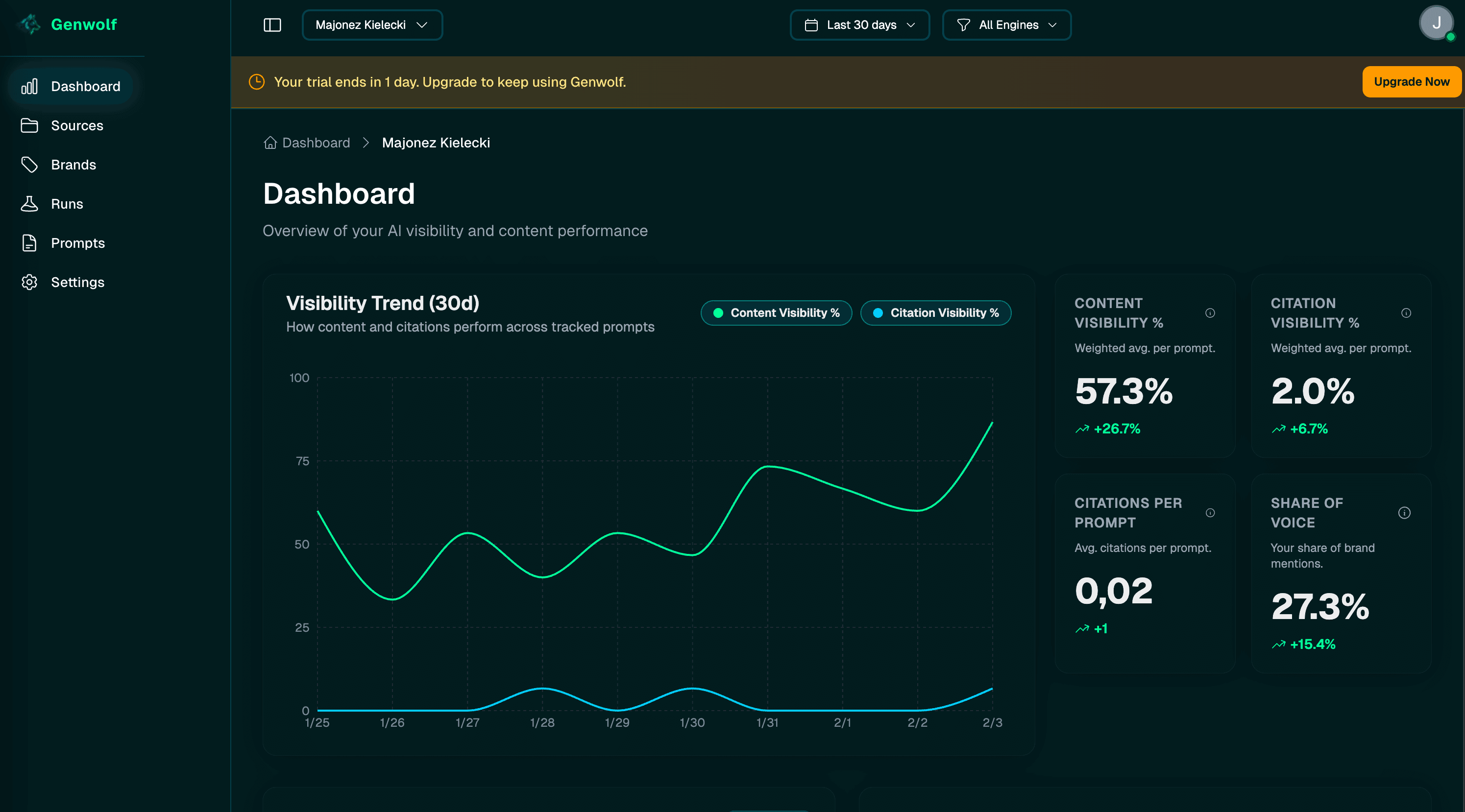

I ran a simple, repeatable experiment over the course of ten days, using Genwolf to automate prompt execution, response collection, and source analysis across multiple AI models.

Instead of asking questions manually, Genwolf allowed me to:

- run the same prompts daily

- distribute them evenly across different AI engines

- track brand mentions, visibility, and cited sources over time

Every day, multiple AI systems were asked the same consumer-style questions about mayonnaise. The goal was to observe which brands appeared, how often, and based on which sources, without rewording prompts or hand-picking responses along the way.

All prompts were written in Polish, because the experiment was designed to reflect how real users in Poland would naturally ask these questions.

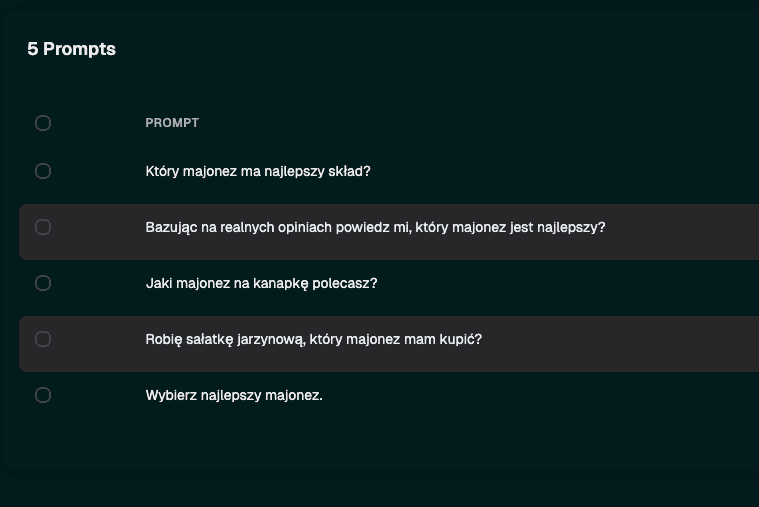

Below are the original prompts, together with their English translations.

- Który majonez ma najlepszy skład? (Which mayonnaise has the best ingredients?)

- Bazując na realnych opiniach powiedz mi, który majonez jest najlepszy? (Based on real opinions, which mayonnaise is the best?)

- Jaki majonez na kanapkę polecasz? (Which mayonnaise would you recommend for sandwiches?)

- Robię sałatkę jarzynową, który majonez mam kupić? (I'm making a traditional vegetable salad; which mayonnaise should I buy?)

- Wybierz najlepszy majonez. (Choose the best mayonnaise.)

Over ten days, this setup resulted in 150 total runs, evenly distributed across:

- ChatGPT (gpt-5.2)

- Perplexity (Sonar)

- Gemini (Flash-3-preview)
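To make the run count concrete, here is a minimal sketch of how such a schedule could be laid out. It assumes each of the five prompts was sent to each engine once per day, which is consistent with 150 runs but is not something Genwolf's internals confirm. The function name `build_run_plan` and the dictionary layout are hypothetical and are not Genwolf's API.

```python
from itertools import product

# The five prompts used in the experiment, in the original Polish.
PROMPTS = [
    "Który majonez ma najlepszy skład?",
    "Bazując na realnych opiniach powiedz mi, który majonez jest najlepszy?",
    "Jaki majonez na kanapkę polecasz?",
    "Robię sałatkę jarzynową, który majonez mam kupić?",
    "Wybierz najlepszy majonez.",
]
ENGINES = ["ChatGPT", "Perplexity", "Gemini"]
DAYS = range(1, 11)  # ten days


def build_run_plan(prompts, engines, days):
    """Return one planned run per (day, engine, prompt) combination.

    Assumption: every prompt goes to every engine once per day.
    """
    return [
        {"day": day, "engine": engine, "prompt": prompt}
        for day, engine, prompt in product(days, engines, prompts)
    ]


plan = build_run_plan(PROMPTS, ENGINES, DAYS)
runs_per_engine = {
    engine: sum(1 for run in plan if run["engine"] == engine)
    for engine in ENGINES
}

print(len(plan))        # total planned runs
print(runs_per_engine)  # runs per engine
```

Under that assumption the plan contains 5 × 3 × 10 = 150 runs, or 50 per engine and 15 per day.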

The screenshots included below show the original Polish prompts.

A clear winner

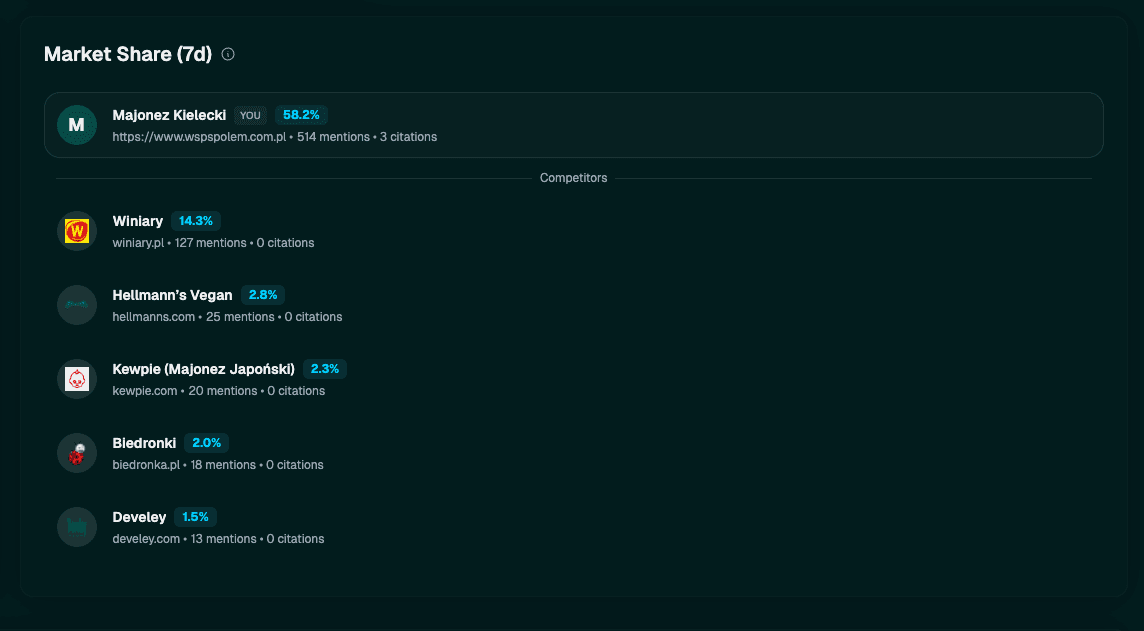

When all brand mentions were aggregated inside Genwolf's analysis layer, the result was very clear.

One brand dominated AI-generated responses. Majonez Kielecki accounted for over 58% of all mentions, while Winiary came second with around 14%. The remaining brands formed a long tail with relatively small visibility.

This kind of distribution is typical for large language models. AI does not spread attention evenly. Instead, it tends to converge on a single “default” answer and reinforce it over time.
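A share-of-mentions figure like the ones above can be computed in a few lines. The sketch below is an illustration only: it counts brand names by simple substring matching, which is an assumption about the method and not a description of Genwolf's analysis layer. The sample responses are placeholder strings, not data from the experiment.

```python
from collections import Counter


def mention_shares(responses, brands):
    """Return each brand's share of all brand mentions across responses.

    Matching is a case-insensitive substring count, so inflected Polish
    forms such as "Kieleckiego" still count as a mention of "Kielecki".
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            counts[brand] += lowered.count(brand.lower())

    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}


# Placeholder responses for illustration, NOT results from the experiment.
toy_responses = ["Kielecki", "Kielecki, Winiary", "Winiary, Kielecki"]
shares = mention_shares(toy_responses, ["Kielecki", "Winiary", "Hellmann's"])

for brand, share in shares.items():
    print(f"{brand}: {share:.0%}")
```

Substring counting is crude: it would miss misspellings and could double-count a brand repeated within one answer, so a per-response "mentioned or not" flag may be the fairer measure depending on the question being asked.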

Interestingly, Kielecki's visibility didn't just stay high. It increased steadily over the ten-day period, even though nothing in the setup changed: the prompts, engines, and schedule stayed the same.

This suggests that AI systems may reinforce previously frequent answers, gradually strengthening dominant narratives simply through repetition.
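With 150 runs over ten days, each day's share rests on roughly 15 responses, so it is worth checking how large an upward trend actually is before reading much into it. One simple way, sketched here as an assumption and not as the method used in the experiment, is to fit a least-squares line through the daily shares and look at its slope. The input values are placeholders, not the measured figures.

```python
def trend_slope(daily_shares):
    """Least-squares slope of share versus day number (day 1, 2, ...).

    A positive slope means the share grew on average per day.
    """
    n = len(daily_shares)
    if n < 2:
        raise ValueError("need at least two days to estimate a trend")

    days = range(1, n + 1)
    mean_day = sum(days) / n
    mean_share = sum(daily_shares) / n

    covariance = sum(
        (day - mean_day) * (share - mean_share)
        for day, share in zip(days, daily_shares)
    )
    variance = sum((day - mean_day) ** 2 for day in days)
    return covariance / variance


# Placeholder daily shares for illustration, NOT the experiment's data.
toy_daily_shares = [0.50, 0.52, 0.54, 0.56]
slope = trend_slope(toy_daily_shares)
print(f"average change per day: {slope:+.3f}")
```

A slope on its own does not separate a real shift from day-to-day variation, so comparing it against the spread of the daily values is a sensible next step.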

Where AI gets its answers

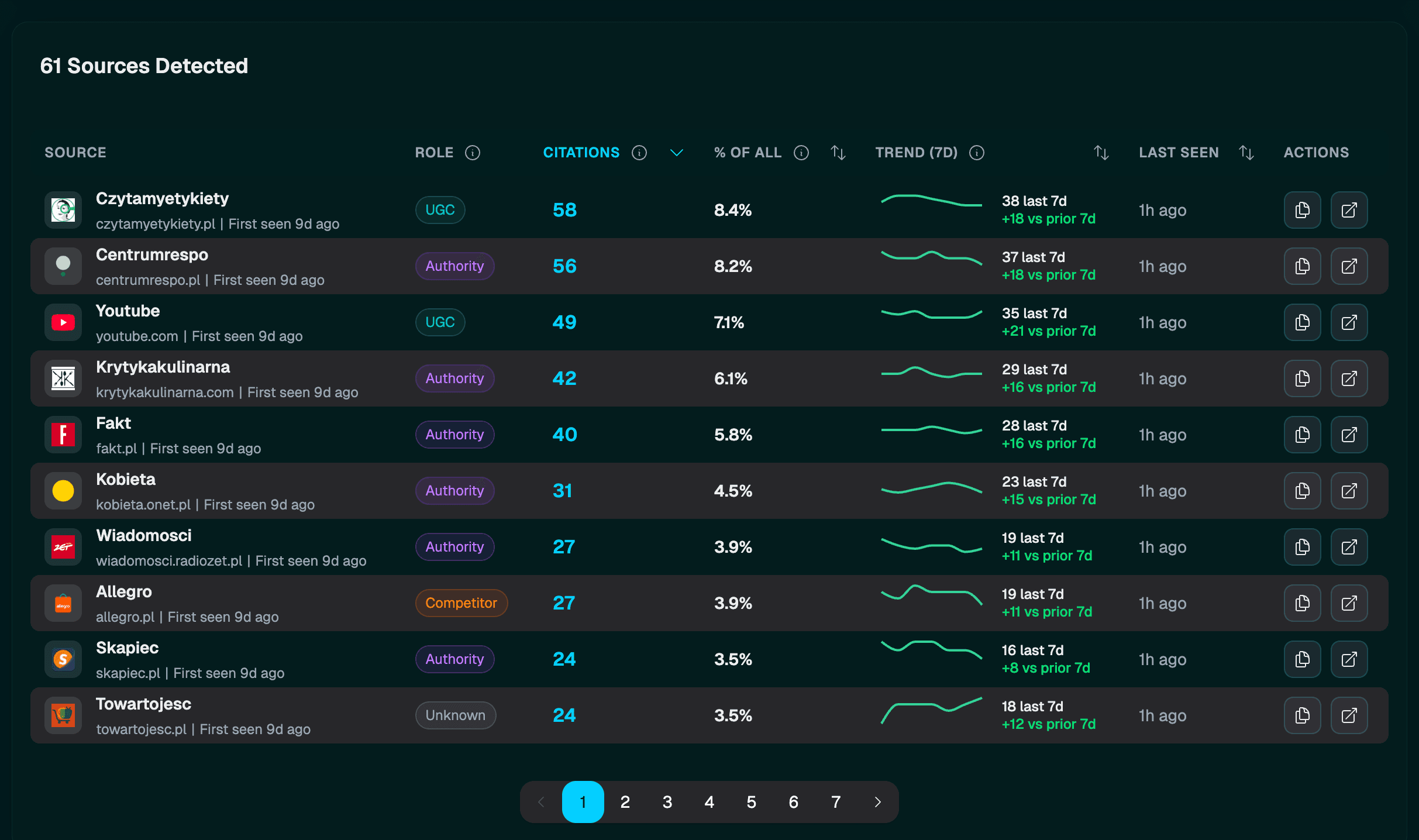

To understand why AI kept choosing the same brands, I looked at the source data collected by Genwolf for each response.

The dominant sources were not official brand websites. Instead, AI relied heavily on:

- comparison articles

- ingredient-focused rankings

- health-oriented blogs

- YouTube videos

- marketplace data

This is an important distinction.

Brands were often mentioned by name, but their own websites were rarely cited as sources. The narrative was built elsewhere.
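The difference between a brand being mentioned and a brand being cited can be checked per response. The sketch below assumes each response comes with its answer text and a list of cited URLs. The brand-to-domain mapping uses made-up `.example` domains and the function name is hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical brand domains, used for illustration only.
BRAND_DOMAINS = {
    "Kielecki": "kielecki.example",
    "Winiary": "winiary.example",
}


def mentioned_vs_cited(answer_text, cited_urls, brand_domains):
    """For each brand, report whether the answer names it and whether
    any cited URL points at the brand's own domain (or a subdomain)."""
    hosts = {(urlparse(url).hostname or "").lower() for url in cited_urls}
    lowered = answer_text.lower()

    result = {}
    for brand, domain in brand_domains.items():
        mentioned = brand.lower() in lowered
        cited = any(
            host == domain or host.endswith("." + domain) for host in hosts
        )
        result[brand] = {"mentioned": mentioned, "cited": cited}
    return result


# Placeholder response for illustration, NOT data from the experiment.
report = mentioned_vs_cited(
    "Kielecki, Winiary",
    [
        "https://blog.example/ranking-majonezow",
        "https://www.winiary.example/majonez",
    ],
    BRAND_DOMAINS,
)
print(report)
```

In this toy case both brands are mentioned, but only one has its own site among the citations. Aggregating that flag across all responses would give a "mentioned but not cited" rate per brand.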

Health-focused blogs and ingredient rankings played a particularly strong role, which aligns closely with prompts asking about “the best ingredients.” YouTube content also appeared frequently, especially videos positioned as expert explanations rather than brand promotion.

One interesting case was Hellmann's. Despite often being described in sources as a fairly average product, it still appeared frequently in AI answers. A closer look shows that this visibility was driven mostly by the Vegan variant, which tends to score better in ingredient-based rankings than the classic version.

This highlights an important pattern: frequency of appearance across sources can outweigh overall sentiment.

Marketplaces as “reality signals”

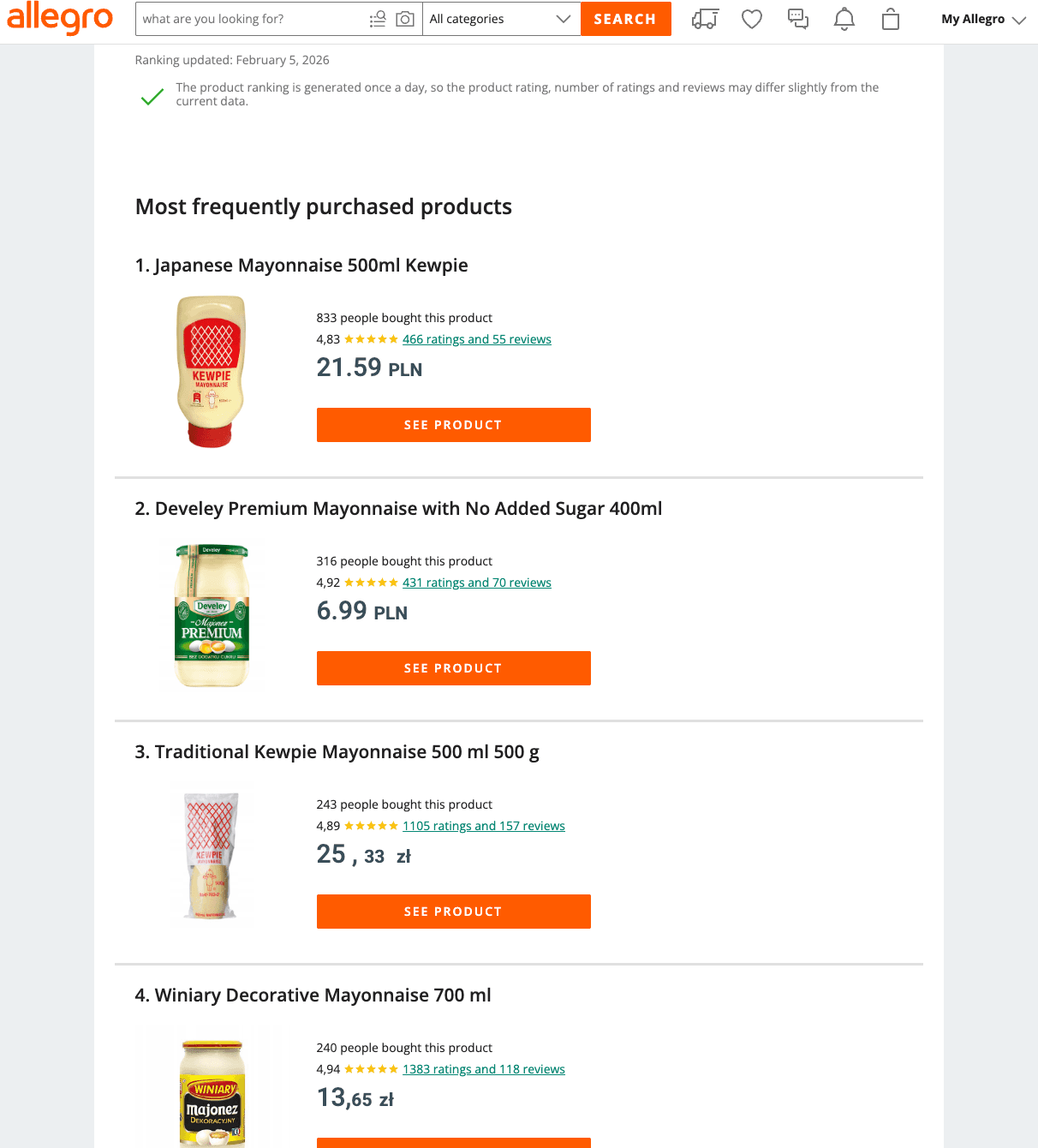

Another surprising signal surfaced in the source analysis.

When prompts referenced “real opinions” or general preference, AI systems — especially Perplexity — frequently relied on e-commerce rankings, such as “most purchased” product lists.

These signals appear to act as a proxy for real-world behavior, sometimes carrying more weight than editorial reviews.

This also explains why less culturally prominent products, such as Japanese mayonnaise, surfaced relatively high in AI answers. Marketplace popularity created visibility where traditional media coverage did not.

What this shows about AI Visibility

This experiment shows that AI Visibility is not about being the best product, nor about having the best website. It's about where and how often a brand appears in the source ecosystem AI pulls from.

AI systems don't evaluate products.

They assemble answers from:

- repetition

- source authority

- perceived real-world signals

The Kielecki vs Winiary debate may be uniquely Polish, but the mechanics behind it are universal. In an AI-driven discovery world, brands don't just compete with each other; they compete with every source AI can cite.

That is what AI Visibility really looks like in practice.